Interview Code Challenge I: Bowling Scoring API

Challenge

A few years ago, while applying for a job, I was given a code challenge that entailed building an API for a bowling scoring service. Building a front end that used it was “optional”, but encouraged. You never know what you’ll be judged on, so I went ahead and threw together a front end as well.

At first, the task seems fairly trivial. You knock down some pins and add them up, right?

Not exactly. If you’re like me, when you think back to experiences bowling a handful of times in your life, you remember a few times watching the scoreboard do something unexpected, or tell a player to go when it didn’t quite make sense, or watching old round values suddenly shift.

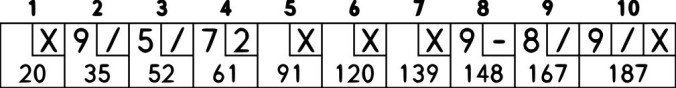

Then you look at a bowling scoresheet, and you realize, “hey, yeah, that’s kind of complicated looking for a scoresheet… what’s actually going on there?”

The odd thing about bowling scoring is that rolls in future frames (the bowling term for “rounds”) can in some cases be counted in previous frames as well as in their own frame. It ends up being an interesting choice for a code challenge because modelling and updating the data elegantly, in a way that is reliable and transparent, is tricky. At the “end” of any given frame, you sometimes won’t know the final score of that frame.

This results in some interesting possible scenarios. A classic one is that if you roll two strikes in a row, the first strike’s frame (typically; it changes near the end of the game) ends up holding values from three different frames.

As I thought about it, the crux of the issue ends up being this: how do you model a future-score, and how do you faithfully model the relationships between individual scores?

At the time, I was proud to have come up with using functions instead of just storing the scores as actual numerical values. So, when you score a strike, your turn immediately ends, and you assign the next two rolls in that frame to functions that point to the next two rolls in the following frame.

Any time you call those functions, they will simply return the output of calling the functions of the next frame. If you roll yet another strike, that second roll function in the second frame will point to a roll in the third frame.

That’s getting hard to follow, isn’t it? Let’s look at this example in code:

// please forgive the liberties taken to have readable+runnable pseudo-code :)
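The three-strikes scenario can be sketched roughly as follows. This is a minimal illustration, not the original code: the three-slot frame shape and the helper names (`makeFrame`, `strike`, `frameScore`, `notRolledYet`) are assumptions made for the example.

```javascript
// Hypothetical sketch: every roll slot holds a function, never a plain number.
const notRolledYet = () => 0; // a pending roll contributes 0 until it happens

const makeFrame = () => ({
  roll_1: notRolledYet,
  roll_2: notRolledYet,
  roll_3: notRolledYet,
});

// Getting a frame's value is always the same action: call its roll functions.
const frameScore = (frame) => frame.roll_1() + frame.roll_2() + frame.roll_3();

// Four frames are enough to show three strikes in a row.
const [frame_1, frame_2, frame_3, frame_4] = [1, 2, 3, 4].map(makeFrame);

// On a strike, the frame's remaining two slots defer to the next frame's
// first two slots. They are looked up at call time, not copied.
function strike(frame, nextFrame) {
  frame.roll_1 = () => 10;
  frame.roll_2 = () => nextFrame.roll_1();
  frame.roll_3 = () => nextFrame.roll_2();
}

strike(frame_1, frame_2);
const afterFirstStrike = frameScore(frame_1); // 10

strike(frame_2, frame_3);
const afterSecondStrike = frameScore(frame_1); // 20

strike(frame_3, frame_4);
const afterThirdStrike = [frame_1, frame_2, frame_3].map(frameScore); // [30, 20, 10]
```

Note that `frame_1` is never touched after its own strike; its score climbs from 10 to 20 to 30 purely because the functions it points at start returning different values.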

The net effect is that the value of frame_3’s roll_1 is counted a total of 3 times, in 3 different frames. Yet as the game progresses, no messy go-back-and-edit logic is needed on the third strike to add values into the previous two frames. Not to mention how messy updating a previous value would be in such a scenario!

Instead, we just have a clean, consistent, simple action. Any time we have a roll, we update one function. Any time we want to get a value, it’s always the same thing: call the function of that roll. Relational links between values are preserved and reflected at all times, making the data model resilient and transparent.

I will never forget the pride I felt showing my code to the room of engineers, and having the lead JS guy excitedly tell me that when he had done this challenge himself, he really struggled with this problem, and he felt like my method was exactly what he wished he had come up with.

Bug-free by Brute-Force

Testing wasn’t my forte at the time, but I also took great pride in hearing that they could not find a single bug in the scoring system. I had nailed it. A year later, I learned that this was pretty rare for candidates. How had I managed it?

Not through elegant code that was so simple it couldn’t be wrong, alas. Nor through TDD. While the data model laid out above helped a great deal, it was in large part through brute-force personal testing and intuition. Aside from trying to break it myself directly, I also wrote a quick-and-dirty randomizer with jQuery selectors that I would run in the console and just watch for mistakes.

Unfortunately, the code from back then shows evidence of that style. I tried to make it as readable as I could manage, but my methodology led to lists of conditionals checking for specific edge cases. I compensated as best I could with careful commenting, but the absolute need for comments here was a form of code smell, I now see.

At the time I felt like the complex server model file was justified, because it was just a complex problem. And I felt like my lack of testing was justified because the time limit was so short.

Given a tight deadline and my relative inexperience with testing at the time, it may in a sense have been wise not to dive headfirst into a methodology I wasn’t familiar with. Still, I think I’ve grown as a programmer since then. This problem is a prime candidate for TDD. Those tests would also have encouraged writing smaller functions, and would likely have led to less chaotic code and less time wasted watching a randomizer loop.
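To give a feel for what a test-first pass might look like, here is a minimal sketch. It assumes a hypothetical `scoreGame` function that takes a flat array of rolls, which is a simpler stand-in and not the function-based model described earlier. The cases are the kind one would likely write before any scoring code exists.

```javascript
// Hypothetical scorer over a flat list of rolls (a stand-in, not the original model).
function scoreGame(rolls) {
  let total = 0;
  let i = 0; // index of the first roll of the current frame
  for (let frame = 0; frame < 10; frame++) {
    const first = rolls[i] ?? 0;
    const second = rolls[i + 1] ?? 0;
    const third = rolls[i + 2] ?? 0;
    if (first === 10) {
      total += 10 + second + third; // strike: next two rolls are the bonus
      i += 1;
    } else if (first + second === 10) {
      total += 10 + third; // spare: next one roll is the bonus
      i += 2;
    } else {
      total += first + second; // open frame
      i += 2;
    }
  }
  return total;
}

// Table of cases, written up front and extended whenever a new edge case appears.
const cases = [
  { name: "all gutter balls", rolls: Array(20).fill(0), expected: 0 },
  { name: "all ones", rolls: Array(20).fill(1), expected: 20 },
  { name: "one spare, then a 3", rolls: [5, 5, 3, ...Array(17).fill(0)], expected: 16 },
  { name: "one strike, then 3 and 4", rolls: [10, 3, 4, ...Array(16).fill(0)], expected: 24 },
  { name: "perfect game", rolls: Array(12).fill(10), expected: 300 },
];

// Names of any cases whose actual score differs from the expected one.
const failures = cases
  .filter((c) => scoreGame(c.rolls) !== c.expected)
  .map((c) => c.name);
```

An empty `failures` array replaces staring at a randomizer: each edge case is pinned down by name and checked on every run.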

Real RESTful-ness

I was told to write the server as a “REST” API. Of course, that term is heavily abused. I wrote up what I thought was a nice enough looking API, and it would have worked just fine. But as a relative novice at the time, I started doubting myself–would it meet their standards?

When you dig deeper into REST, you end up going down a PhD-level rabbit hole. It turns out REST is a term coined in a doctoral dissertation on the topic.

Martin Fowler’s classic article on the subject had a profound effect on me, and I ended up writing new endpoints based on what I learned. I strove for a level 3, truly RESTful server, and I was proud of the result. The endpoints used verbs correctly: PUT requests were idempotent, and GET requests were safe, cacheable, and directed to URLs that reflected resources. Responses returned meaningful and useful status codes. Best of all, the API implemented HATEOAS, guiding the client and informing its next natural step within a game. The consumer of the API need hardly learn the API, as the next request is essentially constructed for them in each response; all they need to do is fill in the blank with the next roll’s value and send it along.

This last point is an especially nice feature I was proud of. This was primarily a challenge regarding the API, and I think that was one of the high points of my attempt. Using this design, clients need not calculate even whose turn it is. Instead, the API tells you what’s next. These are examples of level 3 criteria on the Richardson Maturity Model.
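As a rough illustration of the idea, a response might carry a ready-made description of the next request. Everything in this sketch is an assumption made for the example: the URL layout, the field names (`links`, `next`, `pins`), and both helper functions.

```javascript
// Hypothetical server-side helper: describe the next roll as a hypermedia link.
function describeNextRoll(gameId, state) {
  const { player, frame, roll } = state;
  return {
    player,
    frame,
    roll,
    links: {
      next: {
        // PUT to a URL naming one specific roll: repeating it changes nothing.
        method: "PUT",
        href: `/games/${gameId}/players/${player}/frames/${frame}/rolls/${roll}`,
        body: { pins: null }, // the one blank the client fills in
      },
    },
  };
}

// Hypothetical client-side helper: fill in the blank, keep everything else.
function buildRollRequest(response, pins) {
  const { method, href, body } = response.links.next;
  return { method, href, body: { ...body, pins } };
}

const response = describeNextRoll("42", { player: "alice", frame: 3, roll: 2 });
const request = buildRollRequest(response, 7);
```

The client never works out whose turn it is or which URL to hit; it only supplies the pin count.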

This made the API more powerful, and it also made working with the API easier. As a result, when I decided to start working on the client, I found it incredibly straightforward.

The Client

While the client really was written as a stretch goal on the last little bit of the deadline, I still managed to throw something reasonably functional (if not beautiful) together. Looking back on it now, the client is obviously hastily produced, but it relies on fundamentally solid principles. It’s fairly transparent to read through, for the most part. A couple hours refactoring it and I think it would really shine. The site uses Bootstrap’s grid to basic effect to create minimal but sufficient mobile-responsiveness.

All the heavy lifting for the dynamic functionality is done by Mithril.js, even in the 0.2 incarnation I used at the time. Because of the repeating elements and the need for dynamic view updating, and because I knew they were using React (a similar paradigm) at the company I was applying to, I knew Mithril would be a great choice. It was.

I knew they’d be testing it themselves, and knew the UI wasn’t very streamlined, so I added my little jQuery script I’d used for internal testing onto a button I added labelled “randomize”, to help them out. I also included a “pause” button that just refreshed the page (the game state was maintained).

Comically, they of course commented on the shortcomings of that button as a flaw. (It was just a crude setInterval, so at the end of a game, it would keep trying to move forward, failing infinitely, if you had the console open to watch.) I had assumed they would understand I just threw it in for their convenience, but a bug is a bug. I took the lesson that I should either polish it or just not include it at all.

Years later, I still haven’t forgotten that. So, while writing this post, I actually finally went back and cleaned up that functionality–didn’t want future readers making the same judgement!

One Last Thing To Clean Up

What should the API do if it is told to score an impossibly high number of pins (e.g., roll 5, and then roll 6)? There are two options: be strict (respond with errors), or be permissive (do your best with what you’re given).

An API obviously cannot leave validation to the client. The strict option maximizes clarity and is certainly the right way to go long-term, but it would have required more work in the client: logic to avoid triggering the error in the first place, plus error handling and retry logic for when it happened anyway. This was a 48-hour project, so avoiding those time-sucks was ideal if reasonably feasible.

The permissive route, instead, just required that I do one thing: when scoring, check whether the pins claimed would push the frame’s total above ten. If so, lower the roll to the maximum it could have been (e.g., roll 5, then roll 6? They must have meant 5.)
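A minimal sketch of that check for an ordinary frame might look like this. The function name is hypothetical, the tenth frame would need its own rules, and the guard against negative values is an extra assumption not described above.

```javascript
// Hypothetical helper: cap a claimed second roll at the pins still standing.
function clampSecondRoll(firstRoll, claimedPins) {
  const pinsStanding = 10 - firstRoll;
  // Math.max guards against negative input (an added assumption).
  return Math.max(0, Math.min(claimedPins, pinsStanding));
}

const corrected = clampSecondRoll(5, 6); // 5: only five pins were left
const untouched = clampSecondRoll(5, 3); // 3: a legal roll passes through
```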

While that was adequate at the time (no one complained, no bugs resulted), I really didn’t like that the client interface gave the appearance that it would allow scores that were impossible, relying on silent correction from the API instead.

So, also while writing this post, I spent two or three hours digging through my code and implemented an extension to the API: it now determines in advance the maximum number of pins the next roll could knock down, and makes that information available to the API consumer. Since the API already delivers information about who the next player is, what round they’re now on, what roll they’re on, etc., it was easy to piggy-back this information onto that interface.

In the client, the Mithril.js code was trivially easy to update so that the UI only offers scores up to that maximum.
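One way such a calculation could work is sketched below. The function name, its signature, and the `maxPins` field are assumptions made for illustration. A return value of 0 here means the frame is already complete and no further roll is expected in it.

```javascript
// Hypothetical sketch: the most pins the next roll in this frame could knock down,
// given the rolls already made in the frame.
function maxNextRoll(rollsInFrame, isTenthFrame = false) {
  const [first, second] = rollsInFrame;

  if (first === undefined) return 10; // fresh frame, full rack

  if (!isTenthFrame) {
    // Ordinary frame: a strike or a second roll ends it.
    if (first === 10 || second !== undefined) return 0;
    return 10 - first;
  }

  // Tenth frame: a strike or spare earns fill rolls, possibly on a fresh rack.
  if (second === undefined) return first === 10 ? 10 : 10 - first;
  if (rollsInFrame.length >= 3) return 0; // all three rolls used
  if (first === 10) return second === 10 ? 10 : 10 - second;
  return first + second === 10 ? 10 : 0; // only a spare earns a third roll
}

// Piggy-backed onto the "what's next" information the API already returns.
const nextTurn = { player: "alice", frame: 3, roll: 2, maxPins: maxNextRoll([5]) };
```

A client can then cap its input control at `nextTurn.maxPins` rather than relying on silent correction.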

It was certainly a little painful to work on the server code without tests. I felt that lesson driven home again. But I was pleased that the code I wrote was, even back then, sane enough that I could just pick it up and read it now and dive in and edit. And, surprisingly, except for a handful of tweaks, it ran correctly with no bugs almost right away.

(…At least, no bugs that I noticed. I did make use of that randomizer I had just upgraded, but… without proper testing, who knows! ;) )

Conclusion

So how did I do?

Pros

In the end, I tackled the problem of how to model the data, implemented a mature RESTful API, created a bug-free scoring engine, and included a functional and dynamic front-end. The code could be cleaner, but it’s still transparent enough for me to pick up years later and add features to the front and back end without serious hiccups. Work to be proud of!

Cons

On the other hand, I can see now that it was valid to critique the lack of tests, and probably to look askance at the resulting convoluted conditional statements that underlay the scoring engine as well. Had tests been relied upon, the code would likely have been cleaner and more maintainable. That would have been the mark of a more experienced programmer, and those were lessons I later learned at that job.

Result

I still think I’d give it an 8/10. As for them? The closest thing I got to a score from them was getting hired, so I guess I’ll take that as a pass.

If you ever visit a vintage bowling lane and don’t know how to track the score by hand, or need an interesting alternative to a coin flip, feel free to bring up zebrabowling.kylebaker.io on your phone. ;)